As part of my contribution to framing the challenge, I shared some opening remarks about the disappointment and guilt I feel watching the world today – not just the state of tech, but also the fact that commercial social media has created so much harm – as somebody who advocated strongly for social tools and social media in the pre-Facebook days just after the turn of the new century.

Those of us who believed social and digital technology could usher in a period of inclusion, participation and democratic debate failed to predict the toxicity of Brexit, Trump, Pepe the frog, state-run troll farms, the rise of the far right and other dystopian visions such as China’s ‘social score’ initiative. We lived in a world of blogger meetups, respectful debate and accountability for our actions, since the community of early users was small and knowable. Now, I think it is fair to say that technology is breaking or distorting our institutions and norms, but not yet replacing or improving them – we are destroying the past, but not yet building the future. We have witnessed the development of some amazing superpowers of human connectivity, but we failed to predict how bad people would use good technology.

So what went wrong?

In my opinion, and with the obvious benefit of hindsight, two key terms are scale and trust. People tend to be much nicer to each other within the context of some form of social network connection, but when freed from this constraint, the phenomenon of anonymous cowards and trolls tends to take over. We often think in terms of three basic levels of scale and intimacy that people are used to, and each has its own feedback mechanisms to regulate behaviour; but public social media often collapses these into an imagined global public realm in pursuit of big numbers, and the effects can be very damaging:

- small teams / families, where cooperation is essential and unavoidable (army squads, sports teams, startup teams, etc)

- communities / collaborative groups roughly up to Dunbar’s number in size, where social network dynamics disincentivise bad behaviour

- social networks or interest groups where loose ties are the norm and reputation or identity act as regulators

Throughout history, the phenomenon of ‘othering’ or the Roman legal notion of ‘homo sacer’ has often been a precursor to bad, inhuman behaviour, and this is much easier to achieve outside of the common bonds or commitments of a real-world social network. As an act of self-flagellation, I often read ‘below the line’ comments on newspapers like the Daily Telegraph, or lurk in contentious Twitter debates, and I am appalled by how the pseudo-anonymity of the open internet makes people do and say things they would never contemplate in real life, if the targets of their ire were right in front of them. It sometimes makes me feel the world is full of terrible people, but of course the reality is that this is the work of a small minority of attention seekers with too much time on their hands – e.g. those who want to repeat ‘remoaners lost – get over it’ (UK) or celebrate ‘libtard butthurt’ (USA) ad nauseam without ever providing constructive solutions – and this has contributed to a western political crisis where everybody seems to be virulently against something, but nobody seems to be doing the hard work of coming up with constructive ideas or solutions. It’s like everybody has a megaphone, but nobody has a pencil.

Why did this happen? One obvious reason is that tech companies relied on advertising metrics to value communities and social networks, and therefore pursued quantity and scale rather than quality and outcomes – chasing likes and shares as the route to clickbait and fake news. The quest for scale has produced fragmentation in social networks and even small-scale human relationships, as evidenced by Twitter ‘pile ons’ that can render people as outcasts for pretty minor mis-steps or infractions. Facebook and Twitter are not ‘communities’ – perhaps they are closer to being people farms, as critics such as Aral Balkan have long argued.

Also, in seeking big numbers and scale, we seem to have created lots of feedback mechanisms that amplify small things (“my funny tweet went viral!”), but very few dampeners to do the opposite (“sorry my stats were wrong, please recall all the re-tweets”).

Inter-personal trust is imperfect, but it is highly evolved and vitally important. Yes, we might sometimes place our trust in proxies such as states, flags, religions or even sometimes ‘brands’, but mostly these are aggregates of human relationships and values. The current techno-fantasist idea of replacing human-scale organisations with ‘trustless’ systems that rely on social reputation metrics and distributed ledger (blockchain) technology to create shared ‘truth’ ignores a great deal about what we know of human psychology and motivation, with the result that these systems have become magnets for exploitative hackers, scammers and people seeking a quick buck. And yet the opposite is also possible. We can use technology to scale human trust and to connect groups and networks, rather than seek to obviate the need for trust through (currently very primitive) code. There are good reasons why law is messy and open to interpretation (unlike code, law is painfully aware of its bugs and limitations), and there are good reasons why the professions and guilds have acted as custodians of standards and ethics in business for the past couple of centuries. We might want to exercise caution before we automate their roles entirely. In general, we should try to balance the eagerness of technologists to build a new world in their own image with the knowledge that others, such as social scientists or health professionals, have about the human condition. I sometimes find it ironic that those most empowered to change the world are often those who understand it (and feel comfortable within it) the least.

In the era of mass communications and TV democracy, people were often infantilised and treated like herded cattle by politicians in search of a vote or companies in search of customers. They were told their vote counted or opinion mattered when it did not, and perhaps this bred a sense that they could make cost-free choices without worrying about the consequences, such as demanding Scandinavian-style welfare with US-style low taxes, or the convenience of ready meals but with the health-giving qualities of raw ingredients. In the UK, those who were against the EU are now like the proverbial dog that caught the car – they have no idea what to do next; in Germany, the AfD boast of offering an alternative approach, and yet react with incredulity and cluelessness when asked about any policy outside their anti-immigration agenda.

Perhaps we can only really improve things if we all take responsibility for our words and accountability for our actions, rather than accepting trolling and fake news as facts of life and then expecting platforms that rely on volume to effectively police half the planet. There are very few cost-free choices in life, and some of the better ideas for advancing democratic participation, such as participatory budgeting, are based on trying to encourage more ‘skin in the game’ so that people take responsibility for the decisions and trade-offs they make.

A good place to start, in my view, is to encourage technologists, designers, entrepreneurs and all others involved in this new ecosystem to take responsibility for the long-term and possibly unintended consequences of their innovations and inventions, and the way they work.

As a starting point for guiding my own work, I will try to:

- First do no harm. Consider the longer-term consequences of design decisions.

- Start with human-scale trust and build/grow from there. Stay small unless you need to grow big.

- Encourage trustful not trustless systems.

- Always have skin in the game, and encourage the same for partners and customers.

- Codify positive human values in the lowest-level services we build and use.

- Pursue algorithmic transparency.

- Use smart tech and AI platforms to elevate and augment the human, not replace us.

- Understand the impact of new affordances on our minds and mental health.

- Avoid manipulative tricks / clickbait that exploit our lower-level brain functions.

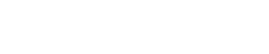

In Copenhagen, I struggled to encapsulate my own thinking on this topic into some pithy principles that would be clear and easy to understand (oh the complexity!), but thankfully others did a better job 🙂, which has resulted in an excellent and thought-provoking starter-pack of #techprinciples for us to consider. I am delighted that something like the #CopenhagenCatalog exists. Let us see if we can popularise some of these principles in our work.

If you agree, please sign up to some of the principles here, share them as widely as you can and encourage anybody who has a stake in building the future to consider these (and other) principles in their work: https://www.copenhagencatalog.org.